Authors:

(1) EASON CHEN, Carnegie Mellon University and Bucket Protocol;

(2) RAY HUANG, Bucket Protocol;

(3) JUSTA LIANG, Bucket Protocol;

(4) DAMIEN CHEN, Bucket Protocol;

(5) PIERCE HUNG, Bucket Protocol.

Table of Links

Introduction & GPTutor Service Design

Customize Prompts, Future Works, Call for Contribution, Conclusion, Acknowledgements, & References

3. CUSTOMIZE PROMPTS

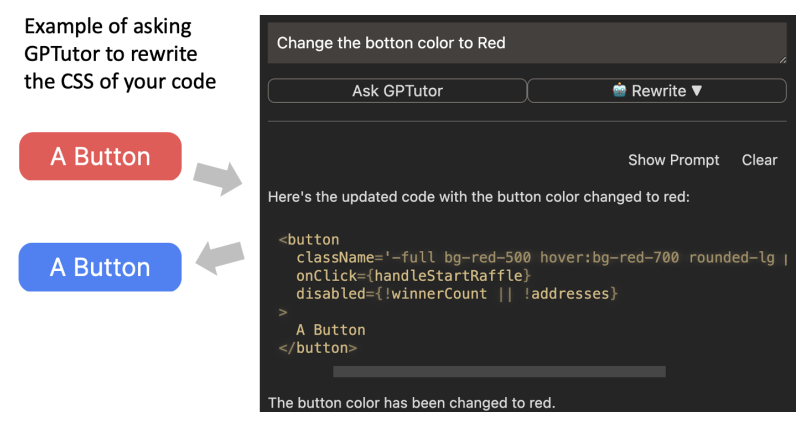

With GPTutor, users can customize prompts for different programming languages and development scenarios and switch between them as needed (Figure 4). Built on GPT-3.5 and GPT-4, GPTutor supports customized prompts in the LangChain template format. Notably, GPTutor can use not only the code in the active window as prompt input but also the source code behind the function the user selects. With this additional context, GPTutor can generate more precise outputs than vanilla ChatGPT or Copilot [4].
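To make the idea of a placeholder-based prompt template concrete, here is a minimal sketch in plain Python. It does not use LangChain or GPTutor's actual code; the template text, the placeholder names (`language`, `selected_code`, `definition_source`), and both helper functions are assumptions chosen for illustration. It only mirrors the `{variable}` placeholder style that LangChain templates use.

```python
from string import Formatter

# Hypothetical template: the wording and placeholder names are illustrative,
# not the prompts that GPTutor actually ships.
EXPLAIN_TEMPLATE = (
    "You are a senior {language} developer.\n"
    "Explain the following code to a newcomer.\n\n"
    "Selected code:\n{selected_code}\n\n"
    "Source of the function it calls:\n{definition_source}\n"
)


def template_variables(template: str) -> list[str]:
    """Return placeholder names in order of first appearance."""
    names: list[str] = []
    for _literal, field, _spec, _conversion in Formatter().parse(template):
        if field and field not in names:
            names.append(field)
    return names


def render_prompt(template: str, **values: str) -> str:
    """Fill every placeholder, failing loudly if one is missing."""
    missing = [name for name in template_variables(template) if name not in values]
    if missing:
        raise KeyError(f"missing template variables: {missing}")
    return template.format(**values)
```

Under these assumptions, the editor side would supply `selected_code` from the active window and `definition_source` from the definition of the chosen function, then pass the rendered string to the model.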

Please check this demo video for an overview of GPTutor’s functions. The prompts GPTutor uses are completely transparent: users can view the current prompt with a single click and edit it on the spot to see how different prompts produce different outputs. Users who are satisfied with their changes can save the modified prompt in the configuration as a personalized prompt. Finally, users are encouraged to submit Pull Requests sharing the prompts they have used, to benefit the overall GPTutor community.
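The save-and-switch workflow can be sketched as a small prompt store. The layout below (a mapping from language to named templates, serialized as JSON) and the function names are assumptions for illustration only; GPTutor's real configuration schema may differ.

```python
import json


def save_prompt(config: dict, language: str, name: str, template: str) -> dict:
    """Return a copy of config with the template stored under language/name."""
    updated = {lang: dict(prompts) for lang, prompts in config.items()}
    updated.setdefault(language, {})[name] = template
    return updated


def select_prompt(config: dict, language: str, name: str, default: str) -> str:
    """Look up a personalized prompt, falling back to a default template."""
    return config.get(language, {}).get(name, default)


# Hypothetical usage: store an edited prompt, then round-trip it through JSON
# as a stand-in for writing to and reading from a settings file.
config = save_prompt({}, "sui-move", "explain", "Explain this Sui-Move code:\n{code}")
restored = json.loads(json.dumps(config, indent=2))
```

Returning a copy instead of mutating the stored configuration keeps the previous prompt available, so an edit that turns out worse can simply be discarded.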

We have specifically tailored GPTutor’s prompts to enhance its ability to explain and generate Sui-Move. This customization is designed to help developers quickly understand Sui-Move development, and it serves as an example of how to tailor prompts for a particular programming language. For example, as shown in Figure 3, developers can set up prompts to use GPTutor on Sui-Move, a language beyond ChatGPT’s training data, to explain Sui-Move code, generate comments for it, and even perform code review on their Sui-Move smart contracts. Moreover, by including the Sui-Move Fungible Coin Smart Contract Template in the prompt as a reference, GPTutor can accurately generate and modify Sui-Move smart contract code related to Fungible Coins. This is intended to help developers understand how Sui-Move contracts work and to speed up the development of their first Fungible Coin smart contract.
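Including a reference template in the prompt amounts to placing known-good code next to the request so the model can imitate it. The sketch below shows one way this could be assembled; the function name, the delimiter lines, and the instruction wording are hypothetical, and the reference text is a placeholder string rather than the actual Fungible Coin template.

```python
def build_generation_prompt(reference_name: str, reference_code: str, request: str) -> str:
    """Assemble a prompt that puts a reference contract ahead of the user's task."""
    return "\n".join(
        [
            f"Use the following {reference_name} as a reference.",
            "Follow its structure and naming conventions.",
            "--- reference start ---",
            reference_code.strip(),
            "--- reference end ---",
            f"Task: {request.strip()}",
        ]
    )


# Placeholder only: in practice this would be the template's real source text.
reference = "<contents of the Sui-Move Fungible Coin template>"
prompt = build_generation_prompt(
    "Sui-Move Fungible Coin smart contract template",
    reference,
    "Create a fungible coin named after my project.",
)
```

Explicit start and end markers make it easier for the model to tell the reference apart from the request, which matters when the target language is poorly represented in its training data.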

4. FUTURE WORKS

In the future, we hope to further enhance GPTutor’s capabilities through prompt engineering, enabling it to support a wider range of contexts and even to switch prompts autonomously based on the context. We also aim to improve the experience of editing prompts in GPTutor, for example by generating multiple responses [2] to help users compare which prompt works best [6]. Moreover, we are interested in using NLP factors to identify the most appropriate explanations and recommendations [3]. Finally, we intend to conduct practical research on GPTutor’s tutoring capabilities, exploring how it can help developers adopt emerging technologies efficiently, for example by assisting in the conversion of natural language documents to domain-specific languages [5, 8].

5. CALL FOR CONTRIBUTION

GPTutor welcomes contributions of custom prompts, especially those related to new programming languages or libraries. You are more than welcome to submit Issues and Pull Requests on GitHub: https://github.com/GPTutor/gptutor-extension.

6. CONCLUSION

In this paper, we discuss the limitations of AI pair programming with LLMs for development and code explanation. We then introduce GPTutor, a tool that lets users customize prompts to address these limitations. Finally, we outline GPTutor’s current progress and future development plans.

ACKNOWLEDGMENTS

This work was supported by the Sui Foundation. This work is presented at the Workshop of Supporting User Engagement in Testing, Auditing, and Contesting AI at The 26th ACM Conference On Computer-Supported Cooperative Work And Social Computing. Funding to attend the conference was provided by the CMU GSA/Provost Conference Funding.

REFERENCES

[1] Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. 2020. Language models are few-shot learners. Advances in Neural Information Processing Systems 33 (2020), 1877–1901.

[2] Eason Chen. 2022. The Effect of Multiple Replies for Natural Language Generation Chatbots. In CHI Conference on Human Factors in Computing Systems Extended Abstracts. 1–5.

[3] Eason Chen. 2023. Which Factors Predict the Chat Experience of a Natural Language Generation Dialogue Service?. In Extended Abstracts of the 2023 CHI Conference on Human Factors in Computing Systems. 1–6.

[4] Eason Chen, Ray Huang, Han-Shin Chen, Yuen-Hsien Tseng, and Liang-Yi Li. 2023. GPTutor: a ChatGPT-powered programming tool for code explanation. arXiv preprint arXiv:2305.01863 (2023).

[5] Eason Chen, Niall Roche, Yuen-Hsien Tseng, Walter Hernandez, Jiangbo Shangguan, and Alastair Moore. 2023. Conversion of Legal Agreements into Smart Legal Contracts using NLP. In Companion Proceedings of the ACM Web Conference 2023. 1112–1118.

[6] Eason Chen and Yuen-Hsien Tseng. 2022. A Decision Model for Designing NLP Applications. In Companion Proceedings of the Web Conference 2022. 1206–1210.

[7] Juan Cruz-Benito, Sanjay Vishwakarma, Francisco Martin-Fernandez, and Ismael Faro. 2021. Automated source code generation and auto-completion using deep learning: Comparing and discussing current language model-related approaches. AI 2, 1 (2021), 1–16.

[8] Niall Roche, Walter Hernandez, Eason Chen, Jérôme Siméon, and Dan Selman. 2021. Ergo–a programming language for Smart Legal Contracts. arXiv preprint arXiv:2112.07064 (2021).

[9] Priyan Vaithilingam, Tianyi Zhang, and Elena L Glassman. 2022. Expectation vs. experience: Evaluating the usability of code generation tools powered by large language models. In CHI Conference on Human Factors in Computing Systems Extended Abstracts. 1–7.

This paper is